Maxime Sabbah

CV | Email | Google Scholar | Github | LinkedIn

I am a Post-Doctoral Researcher in the Gepetto Team at LAAS-CNRS, Toulouse, France, working with Prof. Nicolas Mansard.

I obtained a PhD in Robotics in the Gepetto Team at LAAS-CNRS, Toulouse, France, under the joint supervision of Prof. Vincent Bonnet and Prof. Bruno Watier. I received my Master's degree in aeronautics from ISAE-SUPAERO. I also received an MSc degree in Biomedical Engineering from Imperial College London, advised by Prof. Etienne Burdet and Dr. Sajeeva Abeywardena.

Goal: Foster fluid and natural physical human-robot collaboration.

Focus: How can robots gain a good understanding of how humans move, in order to unlock human-level assistance and intelligence? How can robots perform useful tasks with adaptability, generalizability, agility, and safety?

Method: Coming from a model-based and optimization background, I now develop learning approaches (vision-language-action models, VLAs).

Email: msabbah@laas.fr, maxime.sabbah@hotmail.fr


- [05/12/2025] Our work entitled Optimal Motion Prediction for Human-to-Robot Handovers has been selected as a Best Conference Paper Award Finalist at IEEE ROBIO 2025!

- [05/11/2025] Successfully defended my PhD thesis at LAAS-CNRS, Toulouse, France. Jury members: Prof. Nicolas Mansard (examiner), Prof. Jan Babic (reviewer), Prof. Sylvain Calinon (reviewer), Dr. Pauline Maurice (examiner).

- [11/2024] Two-month stay as a Visiting Researcher in the CLeAR team at the School of Computing, National University of Singapore!

- [05/2024] Participated in the workshop "Human Modelling in Physical Human-Robot Interaction" at ICRA 2024, Yokohama, Japan!

- [05/2024] Invited talk at CNRS-AIST JRL (Joint Robotics Laboratory), Tsukuba, Japan.


COMFI: A Multimodal Industrial Human Motion Dataset for Markerless Motion Capture and Collaborative Robotics

Kahina Chalabi, Maxime Sabbah, Nicolas Gouget, Mohamed Adjel, Guilhem Saurel, Krzysztof Wojciechowski, Bruno Watier, Vincent Bonnet

Submitted to the International Journal of Robotics Research (IJRR), 2025

webpage | pdf | abstract | bibtex | HAL

COMFI (human-robot Collaboration Oriented Markerless For Industry) is a multimodal dataset designed to advance markerless motion capture, ergonomics, and Human–Robot Collaboration (HRC) in factory settings. COMFI contains 4.5 hours of synchronized and spatially co-registered streams acquired from 18 participants performing 24 tasks that span everyday movements (e.g., walking, sit-to-stand) and ergonomically demanding industrial actions (lifting, overhead work, bolting, sanding, welding), with the addition of two HRC scenarios in which a Franka Emika Panda is guided by the human while holding a tool. For a total of 86.5 GB of data, it includes: calibrated multi-view RGB videos (40 Hz), optical motion capture marker and joint center positions, as well as joint angles (100 and 40 Hz), 6D ground-reaction forces (1000 and 40 Hz), and robot telemetry (200 and 40 Hz). Camera intrinsics/extrinsics, global triggers, and software-barrier synchronization for webcams are distributed, along with participant-scaled human Unified Robot Description Format (URDF) files that adhere to International Society of Biomechanics conventions, enabling kinematics, dynamics, and torque estimation. Videos are anonymized while preserving facial cues useful to markerless pipelines. Accompanying code supports loading, calibration, and visualization. COMFI enables rigorous benchmarking of markerless pose estimation under occlusion and clutter against reference systems, allowing current state-of-the-art algorithms to be extended to complex industrial scenarios. COMFI is expected to catalyze reproducible, cross-disciplinary research toward safer, more ergonomic HRC.

@unpublished{chalabi2025comfi,

author={Chalabi, Kahina and Sabbah, Maxime and Gouget, Nicolas and Adjel, Mohamed and Saurel, Guilhem and Wojciechowski, Krzysztof and Watier, Bruno and Bonnet, Vincent},

note = {Submitted to International Journal of Robotics Research ({IJRR})},

title={{COMFI}: A Multimodal Industrial Human Motion Dataset for Markerless Motion Capture and Collaborative Robotics},

year={2025}

}


Minimal Observations Inverse Reinforcement Learning for Predicting Human Box-Lifting Motions

Maxime Sabbah, Filip Bečanović, Sarmad Mehrdad, Ludovic Righetti, Bruno Watier, Vincent Bonnet

IEEE-RAS 24th International Conference on Humanoid Robots (Humanoids) 2025, (Poster)

webpage | pdf | abstract | bibtex | HAL

Heavy-load manual lifting poses a significant risk of injury, motivating the need for personalized robotic assistance. The Minimal Observations Inverse Reinforcement Learning (MO-IRL) algorithm has recently demonstrated strong capabilities in recovering underlying optimality principles from very few demonstrations of simulated robotic motions, and at a very reasonable computational cost. Building on this, the present study integrates ten biomechanically informed cost functions into a direct optimal control formulation to predict human motion during heavy-load manual box-lifting tasks. Contrary to previous literature, thanks to the computational efficiency of MO-IRL, we allow time-varying optimal weights and include a collision-avoidance constraint within the set of cost functions. This constraint represents the subject's apprehension of hitting the target table. As MO-IRL requires careful tuning of multiple hyperparameters, we employ a grid search to identify the optimal set. With this configuration, the predicted motion achieves an average accuracy of 11.5 ± 6.2 deg across all joint angles, outperforming comparable methods. The inferred cost weights reveal a time-varying control strategy: initially minimizing lower-limb torques, then smoothing the motion through reduced joint accelerations and load velocity, and finally adjusting to avoid table collision. These findings show that biomechanically guided MO-IRL, coupled with direct optimal control, can accurately recover complex, constrained lifting motions while providing interpretable insights into human motor objectives, paving the way for adaptive and user-specific robotic assistance.

@inproceedings{sabbah2025lifting,

TITLE = {{Minimal Observations Inverse Reinforcement Learning for Predicting Human Box-Lifting Motions}},

AUTHOR = {Sabbah, Maxime and Be{\v c}anovi{\'c}, Filip and Mehrdad, Sarmad and Righetti, Ludovic and Watier, Bruno and Bonnet, Vincent},

URL = {https://hal.science/hal-05191852},

BOOKTITLE = {{IEEE International Conference on Humanoid Robots (Humanoids) 2025}},

ADDRESS = {Seoul, South Korea},

YEAR = {2025},

MONTH = Sep,

PDF = {https://hal.science/hal-05191852v1/file/IRL_Lifting_final_ICHR2025.pdf},

HAL_ID = {hal-05191852},

HAL_VERSION = {v1},

}


Optimal Motion Prediction for Human-to-Robot Handovers

Maxime Sabbah, Krzysztof Wojciechowski, Harold Soh, David Hsu, Ludovic Righetti, Nicolas Mansard, Bruno Watier, Vincent Bonnet

IEEE 21st International Conference on Robotics and Biomimetics (ROBIO) 2025, (Oral, Best Conference Paper Award Finalist)

webpage | pdf | abstract | bibtex | code | HAL

Seamless human-robot handovers require precision, timing, and safety. When humans have no visual feedback, robots must rely on accurately estimating and predicting the human's motion. In this work, a real-time human motion prediction and estimation framework for human-to-robot handovers relying on a planar biomechanical model and cost functions extracted from the motor control literature is proposed. Thanks to inverse reinforcement learning, it is possible to iteratively determine the optimal weighting of these cost functions by solving a direct optimal control problem for reaching tasks. An affordable, markerless human pose estimation pipeline was used to estimate and predict the human arm motion in real time. These predictions were then integrated into a model predictive controller for a seven-degree-of-freedom robot manipulator, successfully intercepting participants' hands in 88.6 ± 8.0% of trials, 0.63 s before they reached their intended final hand pose. Experimental validation with blindfolded participants resulted in a predicted joint angle error of 8.7 ± 4.6 deg during handover trials. The proposed framework offers a promising solution for safe and effective human-to-robot handovers, particularly for applications involving visually impaired users.

@inproceedings{sabbah2025optimal,

TITLE={Optimal Motion Prediction for Human-to-Robot Handovers},

AUTHOR={Sabbah, Maxime and Wojciechowski, Krzysztof and Soh, Harold and Hsu, David and Righetti, Ludovic and Mansard, Nicolas and Watier, Bruno and Bonnet, Vincent},

URL = {https://hal.science/hal-04970974},

BOOKTITLE = {{IEEE International Conference on Robotics and Biomimetics (ROBIO) 2025}},

ADDRESS = {Chengdu, China},

YEAR = {2025},

MONTH = Dec,

PDF = {https://hal.science/hal-04970974v1/preview/Optimal_Motion_Prediction_for_Human_To_Robot_Handovers.pdf},

HAL_ID = {hal-04970974},

HAL_VERSION = {v1},

}


Concurrent Validity of Embedded Solutions for Whole Body Dynamics Analysis

Maxime Sabbah, Bruno Watier, Raphaël Dumas, Maxime Gautier, Vincent Bonnet

Published in IEEE Sensors Journal 2024

webpage | pdf | abstract | bibtex | HAL

This study investigates the possibility of estimating body segment inertial parameters (BSIP) and performing human dynamics analysis using embedded sensors. Affordable embedded inertial measurement units and instrumented force-sensing insoles have not yet demonstrated sufficient accuracy for dynamic assessments of motions in sports or rehabilitation tasks when compared to laboratory-grade solutions, such as marker-based stereophotogrammetric systems and force plates. In this paper, we developed a BSIP identification pipeline to estimate inertial parameters among ten healthy young volunteers. Once these parameters are properly identified, several comparisons can be made. First, for the external wrench, we compare estimates derived from different kinematic modalities coupled with either identified BSIP or anthropometric tables against force plate measurements. Second, for joint torques, we compare estimates using embedded kinematics and either identified BSIP or anthropometric tables to the best available reference, which comes from marker-based stereophotogrammetric systems combined with identified BSIP. For validation, in the Y-Balance postural test, comparing external wrench estimates using kinematics from embedded inertial measurement units and identified BSIP to force plate measurements revealed a root mean square error of 5.9 N for forces and 18.0 N·m for moments, which corresponds to a large center of pressure position error of 3.2 cm. Overall, using identified BSIP reduced the normalized root mean square error for joint torques by 6.5% compared to using anthropometric tables, suggesting that kinematic errors from embedded inertial measurement units cannot be entirely compensated.

@article{sabbah2024sensors,

title={Concurrent validity of embedded solutions for whole body dynamics analysis},

author={Sabbah, Maxime and Watier, Bruno and Dumas, Raphael and Gautier, Maxime and Bonnet, Vincent},

journal={IEEE Sensors Journal},

year={2024},

publisher={IEEE}

}


Lower Limbs Human Motion Estimation From Sparse Multi-Modal Measurements

Mohamed Adjel, Maxime Sabbah, Raphaël Dumas, Marta Mirkov, Nicolas Mansard, Samer Mohammed, Vincent Bonnet

IEEE RAS EMBS 10th Int. Conf. on Biomedical Robotics and Biomechatronics, Sep 2024, Heidelberg, Germany

webpage | pdf | abstract | bibtex | HAL

This study aimed to estimate 3D lower-limb joint kinematics during sit-to-stand and squat exercises using a new affordable motion capture system. Utilizing a reduced number of affordable visual inertial measurement units and markerless data, the study investigates the performance of these modalities in comparison to a reference stereophotogrammetric system. Indeed, markerless data are easily accessible from an RGB image, but few studies have investigated their accuracy when used for inverse kinematics in rehabilitation exercises. Thus, ankle, knee, and hip joint center positions and joint angles were obtained through a novel sliding-window inverse kinematics algorithm. Joint angles were estimated with an average error of 8.1 deg when inertial and visual data were used and 13.4 deg when using solely markerless data. The joint center position estimation error was also reduced by a factor of 2.5 when using the proposed approach rather than purely markerless data. These results, together with the affordability and ease of use of the proposed system, open the door to future field applications in both rehabilitation and sport.

@inproceedings{adjel2024biorob,

TITLE = {{Lower Limbs Human Motion Estimation From Sparse Multi-Modal Measurements}},

AUTHOR = {Adjel, Mohamed and Sabbah, Maxime and Dumas, Raphaël and Mirkov, Marta and Mansard, Nicolas and Mohammed, Samer and Bonnet, Vincent},

BOOKTITLE = {{IEEE RAS EMBS 10th International Conference on Biomedical Robotics and Biomechatronics}},

ADDRESS = {Heidelberg, Germany},

YEAR = {2024},

MONTH = Sep,

URL = {https://hal.science/hal-04504752},

PDF = {https://hal.science/hal-04504752v1/file/IEEE_BioRob_HAL.pdf}

}


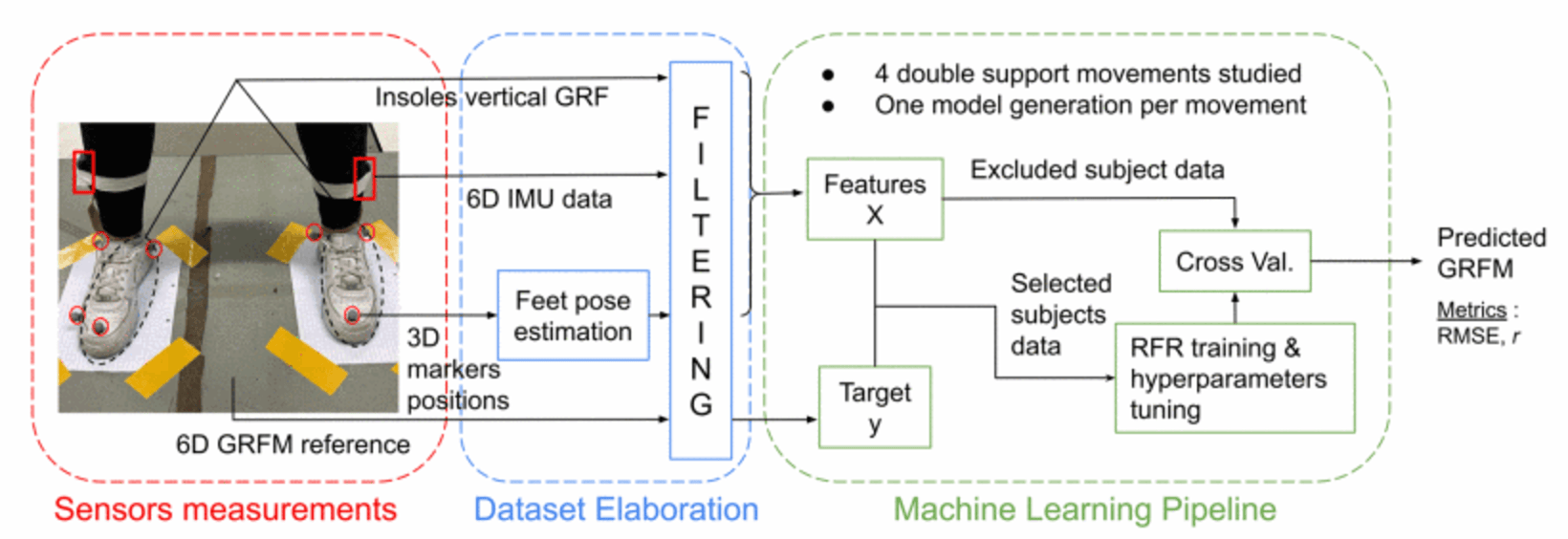

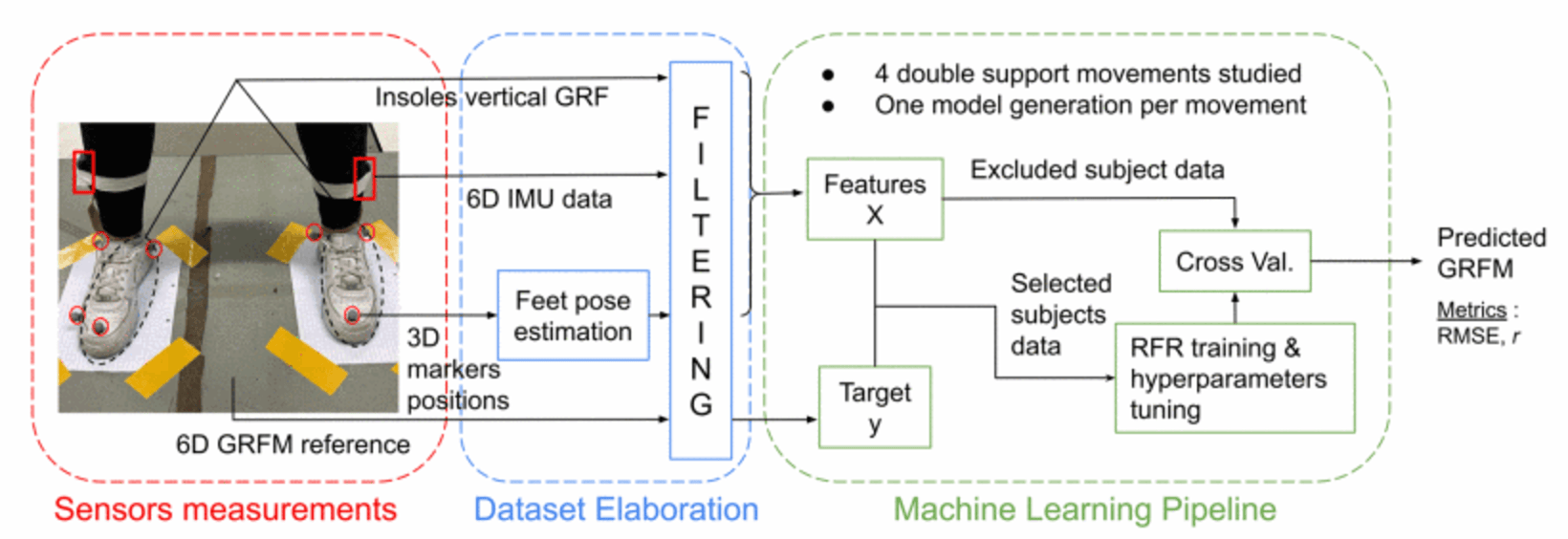

Ground Reaction Forces and Moments Estimation from Embedded Insoles using Machine Learning Regression Models

Maxime Sabbah, Raphaël Dumas, Zoe Pomarat, Lucas Robinet, Mohamed Adjel, Bruno Watier, Vincent Bonnet

IEEE RAS EMBS 10th Int. Conf. on Biomedical Robotics and Biomechatronics, Sep 2024, Heidelberg, Germany, (Oral)

webpage | pdf | abstract | bibtex | HAL

The objective of this paper was to assess the possibility of estimating 6D ground reaction forces and moments during continuous double-support exercises using instrumented force insoles. Using machine learning regression, the study evaluated the performance of an embedded solution in comparison to a reference laboratory-grade force plate. While insoles have been validated in the context of gait, few studies have investigated their accuracy in estimating ground reaction forces and moments for rehabilitation exercises with both feet on the ground. Thus, popular ankle and hip strategies, squat, and hula hoop exercises were investigated. The estimation accuracy was reported with a low average error of 1.6 ± 0.3% of the body weight for forces and 1.2 ± 0.3% of the body weight times the body height for moments, along with a moderate correlation, when using solely features extracted from insole measurements. These results demonstrated the possibility of using embedded solutions to estimate the full ground reaction wrench if the learning process was applied for each specific task separately.

@inproceedings{sabbah2024biorob,

title={Ground reaction forces and moments estimation from embedded insoles using machine learning regression models},

author={Sabbah, Maxime and Dumas, Raphael and Pomarat, Zoe and Robinet, Lucas and Adjel, Mohamed and Watier, Bruno and Bonnet, Vincent},

booktitle={2024 10th IEEE RAS/EMBS International Conference for Biomedical Robotics and Biomechatronics (BioRob)},

pages={154--159},

year={2024},

organization={IEEE}

}


FIGAROH: a Python toolbox for dynamic identification and geometric calibration of robots and humans

Dinh Vinh Thanh Nguyen, Vincent Bonnet, Maxime Sabbah, Maxime Gautier, Pierre Fernbach, Florent Lamiraux

IEEE Int. Conf. on Humanoid Robots (Humanoids), Dec 2023, Austin, USA

webpage | pdf | abstract | bibtex | code | HAL

The accuracy of the geometric and dynamic models for robots and humans is crucial for simulation, control, and motion analysis. For example, joint torque, which is a function of geometric and dynamic parameters, is a critical variable that heavily impacts the performance of model-based control, or that can motivate a clinical decision after a biomechanical analysis. Fortunately, these models can be identified using extensive work from the literature. However, for a non-expert, building an identification model and designing an experimentation plan, which should not require long hours and/or lead to poor results, is not a trivial task, especially for anthropometric structures such as humanoids or humans that need frequent updates. In this work, we propose a unified framework for geometric calibration and dynamic identification in the form of a Python open-source toolbox. Besides identification model building and data processing, the toolbox can automatically generate exciting postures and motions to minimize the experimental burden from the robot, measurements, and environment description. The possibilities of this toolbox are exemplified with several datasets of human, humanoid, and serial robots.

@inproceedings{nguyen2023figaroh,

title={FIGAROH: a Python toolbox for dynamic identification and geometric calibration of robots and humans},

author={Nguyen, Thanh DV and Bonnet, Vincent and Sabbah, Maxime and Gautier, Maxime and Fernbach, Pierre and Lamiraux, Florent},

booktitle={2023 IEEE-RAS 22nd International Conference on Humanoid Robots (Humanoids)},

pages={1--8},

year={2023},

organization={IEEE}

}


Multi-Modal Upper Limbs Human Motion Estimation from a Reduced Set of Affordable Sensors

Mohamed Adjel, Maxime Sabbah, Raphaël Dumas, Nicolas Mansard, Samer Mohammed, Bruno Watier, Vincent Bonnet

IEEE/RSJ Int. Conf. on Intelligent Robots and Systems (IROS), Oct 2023, Detroit, USA

webpage | pdf | abstract | bibtex | HAL

This study aims to develop a new affordable motion capture system for estimating human upper-limb joint kinematics, based on a reduced set of visual inertial measurement units coupled with a markerless skeleton tracking algorithm. The markerless skeleton tracking algorithm alleviates the kinematic redundancy that is observed if only a single visual inertial measurement unit is used at the hand level, but it introduces undesired outliers. A Sliding Window Inverse Kinematics Algorithm based on a biomechanical model is proposed to filter out outliers. It has the advantage of constraining the evolution of joint kinematics while being able to handle multiple modalities. The proposed system was validated with five healthy volunteers performing a popular rehabilitation pick-and-place task. Joint angles estimated using our method were compared with the ones obtained using a reference stereophotogrammetric system. The results showed an average root mean square error of 9.7 deg along with an average correlation of 0.8. These results compare favorably with literature results obtained with more numerous and relatively costly sensors or more elaborate and expensive markerless systems.

@inproceedings{adjel2023iros,

title={Multi-modal upper limbs human motion estimation from a reduced set of affordable sensors},

author={Adjel, Mohamed and Sabbah, Maxime and Dumas, Raphael and Mansard, Nicolas and Mohammed, Samer and Watier, Bruno and Bonnet, Vincent},

booktitle={2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},

pages={10926--10932},

year={2023},

organization={IEEE}

}


Journal Service

- IEEE Transactions on Robotics (T-RO) – 2025

- IEEE Robotics and Automation Letters (RA-L) – 2025


Conference Service

- IEEE International Conference on Robotics and Automation (ICRA) 2025

- IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2025

- IEEE International Conference on Humanoid Robots (ICHR) 2024, 2025

- International Conference on Robotics in Alpe-Adria-Danube Region (RAAD) 2025
